Introduction

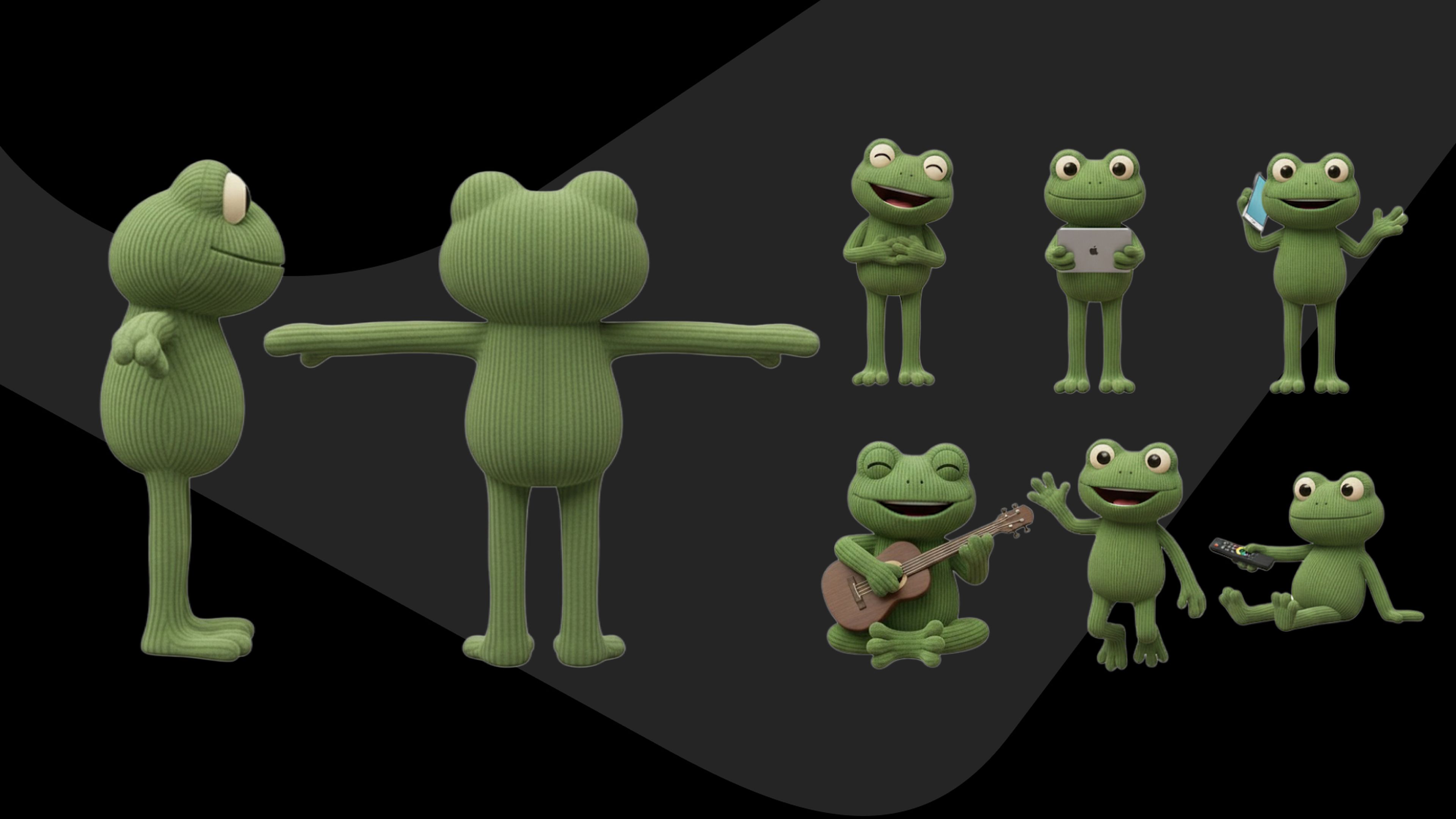

Over the past few months, we’ve tackled a range of AI video projects, such as social assets for Old Captain Rum, interactive videos for Rituals, and an intro for the Lurni AI platform. Recently, we took on our most ambitious challenge yet: a complete campaign for Overstappen.nl, including a TV commercial, digital stills, and social assets, all created entirely with AI.

The commercial went live on Dutch TV this month, and now we want to take you behind the scenes. This is the full story of our process, from initial character concept to final broadcast, including the tools we used, the obstacles we overcame, and the crucial lessons we learned.